This post was contributed by David Mellor from the Center for Open Science.

In the last decade, researchers have brought issues in reproducible research to the forefront in the so-called “reproducibility crisis.” Results in preclinical, biomedical and psychological sciences were called into question after credible attempts to replicate major findings could not be replicated by other researchers.There is both theoretical and empirical evidence (in psychology, cancer biology, pre-clinical life science work, economics) that published research is difficult to replicate.

Why is replicating previous research findings so difficult to do?

There are four reasons that research can be difficult to replicate.

Unclear methods

Until someone attempts to replicate the results of another’s work, it may be impossible to determine precisely how the methods were implemented. We run into this problem frequently over the course of the Reproducibility Project: Cancer Biology, where reported methods may not include how many tests were conducted on how the results were analyzed. A casual reading of the study is not enough to uncover these omissions, so it is unlikely to be caught during the peer review process, which often focuses more attention on the results instead of the methods.

Unreported flexibility and questionable research practices

In an attempt to present only the most novel or significant findings, and combined with powerful cognitive biases such as motivated reasoning and hindsight bias, we may fool ourselves and our readers into believing the results are more credible than they actually are. Throwing out plates that don’t respond as expected, use of representative images, measuring the main outcomes in several different ways, and other questionable research practices (QRPs) can quickly lead us to find statistically significant results that are not robust.

Sampling noise

There is, of course, simple noise in the process of sampling and inference-making. Even perfect transparent and rigorous studies will give false signals from time to time. False positives in original studies and false negatives in replications are unavoidable, but can be minimized with high powered research designs.

Unanticipated discoveries

Finally, there are good reasons for obtaining a different result after conducting a replication study. An important difference between the original setting and the new one, previously unaccounted for, may lead to different results. Different setting, different population, new genetic variation, or different handling can all be important.

If we eliminate the problems above: unclear methods, QRPs, and small samples, then when replications do not come out the same as an original research finding we can be more certain that some important, previously unknown variable actually makes a big difference for the robustness of the findings.

How do we make research more reproducible?

Combat unclear methods with open data, materials, and code

Only when you try to replicate the work of other researchers (or your own from years ago!) do you really appreciate how few details are provided in most published papers. The precise timing, order, or every combination of materials and treatments is all too often glossed over. This is why it is important to be able to look under the hood at the raw data, analysis code, and other materials. The use of reporting guidelines can also help remind us to include all of the essential details. These items rarely exist or are preserved as part of the publishing process and become lost if not archived.

Remove bias with preregistration

Preregistration is the process of creating a time-stamped research plan in a public repository before conducting the experiment (note: registrations can be embargoed while the research is ongoing, but all registries do require that research plans become public eventually). It is typically done right before data collection starts, and should include a specific plan for how data will be collected, what the hypotheses are, and how each hypothesis will be tested with a specific statistical test.

Preregistration makes clear the distinction between hypothesis testing work, in which a-priori hypotheses are tested with a new experiment; and hypothesis generation, in which data are investigated to look for unexpected trends or differences between groups. Hypothesis generation is critical for advancement, but we are too often encouraged to present that work using hypothesis testing statistical tools that generate a p-value.

As an example, if you hypothesize that growth rates will be higher in treatment versus control, and you think there are four important times to measure that growth rate, you report the results of all four of those tests and not just the subset of those that return a particular result. Anything else that occurs during the course of the study, say a fifth time has unpredictably high rates, should be reported as a new discovery that deserves confirmation with new data and a new, preregistered experiment.

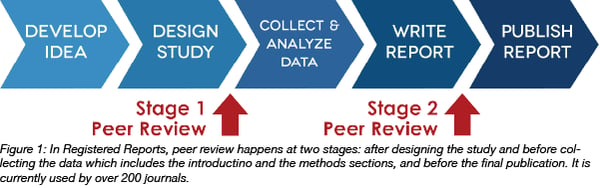

Reduce sampling noise with registered reports, the gold standard for testing hypotheses

Finally, Registered Reports combine all the best practices of Open Science. With a Registered Report, peer review occurs before results are known. The author submits the research plan (similar to a preregistration, but usually with a fully fleshed out introduction section) to one of 222 participating journals before a study is conducted. Editors and reviewers recommend changes, and if everyone agrees that the research questions are important enough to be answered and the methods are appropriate to answer those questions, the article is given “in-principle acceptance” to publish the final results regardless of the outcome of the study. That way, the author is not left in the uncomfortable position of having robust, null findings that are notoriously difficult to publish.

With Registered Reports, the authors are able to make that clear distinction between hypothesis testing and generation, can be sure that results will be reported regardless of outcome, and are able to receive feedback on their study plans at a point in the research lifecycle when suggestions can be incorporated into the proposed experiment. Furthermore, the journal and reviewers are incentivized to make sure that any possible null is really a true null and not a false negative. Therefore, they will place special emphasis on positive controls and good sample sizes, concerns that are too easy to overlook when presented with a significant result that may be the result of noise, p-hacking, or unclear methods.

With Registered Reports, the authors are able to make that clear distinction between hypothesis testing and generation, can be sure that results will be reported regardless of outcome, and are able to receive feedback on their study plans at a point in the research lifecycle when suggestions can be incorporated into the proposed experiment. Furthermore, the journal and reviewers are incentivized to make sure that any possible null is really a true null and not a false negative. Therefore, they will place special emphasis on positive controls and good sample sizes, concerns that are too easy to overlook when presented with a significant result that may be the result of noise, p-hacking, or unclear methods.

Many thanks to our guest blogger David Mellor!

David Mellor leads the policy and incentive programs at the Center for Open Science in order to reward increased transparency and reduced bias in scientific research. These include policies for publishers and funders in the Transparency and Openness Promotion Guidelines; preregistration to increase clarity in study design and analysis; removing publication bias with Registered Reports; and recognizing increased transparency with badges.

David Mellor leads the policy and incentive programs at the Center for Open Science in order to reward increased transparency and reduced bias in scientific research. These include policies for publishers and funders in the Transparency and Openness Promotion Guidelines; preregistration to increase clarity in study design and analysis; removing publication bias with Registered Reports; and recognizing increased transparency with badges.

Before coming to the Center for Open Science, Dr. Mellor worked with citizen scientists to design and implement authentic scientific research and as the Director of Advising in the Division of Life Sciences at Rutgers University. His dissertation and research background is in citizen science and behavioral ecology of cichlid fishes. Find David online at https://orcid.org/0000-0002-3125-5888 and @EvoMellor.

Topics: Scientific Sharing, Reproducibility

Leave a Comment